Introduction

In a world flooded with information, efficiently accessing and extracting relevant data is invaluable. ResearchBot is an LLM-powered application that combines OpenAI’s LLMs (Large Language Models) with LangChain for information retrieval. This article is a step-by-step manual for building your own ResearchBot and shows how it can be helpful in real life. It is like having an intelligent assistant that finds the information you need in a sea of data. Whether you love coding or are interested in AI, this guide is here to help you empower your research with a tailored LLM-powered AI assistant. It’s your journey to unlocking the potential of LLMs and changing how you access information.

Learning Objectives

- Understand the deeper concepts of LLMs (Large Language Models), LangChain, vector databases, and embeddings.

- Explore real-world applications of LLMs and ResearchBot in fields like research, customer support, and content generation.

- Discover best practices for integrating ResearchBot into existing projects or workflows, improving productivity and decision-making.

- Build ResearchBot to streamline the process of data extraction and answering queries.

- Stay updated with the trends in LLM technology and its potential for revolutionizing how we access and use information.

This article was published as a part of the Data Science Blogathon.

What is ResearchBot?

ResearchBot is a research assistant powered by LLMs. It is an innovative tool that can quickly access and summarize content, making it a great partner for professionals across different industries.

Imagine you have a personalized assistant that can read and understand multiple articles, documents, and web pages and provide you with short, relevant summaries. ResearchBot aims to reduce the time and effort your research requires.

Real-World Use Cases

- Financial Analysis: Stay updated with the latest market news and receive quick answers to financial queries.

- Journalism: Gather background information, sources, and references for articles efficiently.

- Healthcare: Access current medical research papers and summaries for research purposes.

- Academics: Find relevant academic papers, research materials, and answers to research questions.

- Legal Research: Retrieve legal documents, rulings, and insights on legal issues swiftly.

Technical Terminology

Vector Database

A container for storing vector embeddings of text data, which is crucial for efficient similarity-based searches.

Semantic Search

Understanding user query intent and context to perform searches without depending entirely on perfect keyword matching.

Embedding

A numerical representation of text data that allows efficient comparison and search.

Technical Architecture of the Project

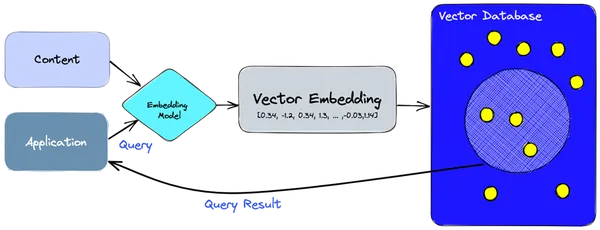

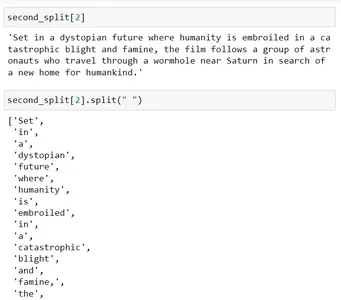

- We use the embedding model to create vector embeddings for the information or content we need to index.

- The vector embedding is inserted into the vector database, with some reference to the original content the embedding was created from.

- When the application issues a query, we use the same embedding model to create embeddings for the query, and use those embeddings to query the database for similar vector embeddings.

- These similar embeddings are associated with the original content that was used to create them.
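The four steps above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: `embed` is a toy bag-of-words stand-in for a real embedding model (it matches shared words, not meaning), and `ToyVectorStore` is a hypothetical in-memory list, not a real vector database.

```python
import math
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: a bag-of-words count vector."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class ToyVectorStore:
    """Hypothetical in-memory store: each embedding keeps a reference to its content."""

    def __init__(self):
        self.rows = []  # list of (embedding, original content)

    def add(self, content):
        # Steps 1 and 2: embed the content and store it with a link to the source
        self.rows.append((embed(content), content))

    def query(self, text, k=1):
        # Step 3: embed the query with the same model and rank by similarity
        q = embed(text)
        ranked = sorted(self.rows, key=lambda row: cosine(q, row[0]), reverse=True)
        # Step 4: return the original content behind the most similar embeddings
        return [content for _, content in ranked[:k]]


store = ToyVectorStore()
store.add("the stock market closed higher today")
store.add("new hiking trails opened in the national park")
result = store.query("stock market news", k=1)
print(result)
```

A real embedding model would also match texts that share meaning but no words, which this toy version cannot do.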

How does the ResearchBot Work?

This architecture supports storage, retrieval, and interaction with content, making ResearchBot a useful tool for information retrieval and analysis. It relies on vector embeddings and a vector database for quick and accurate content searches.

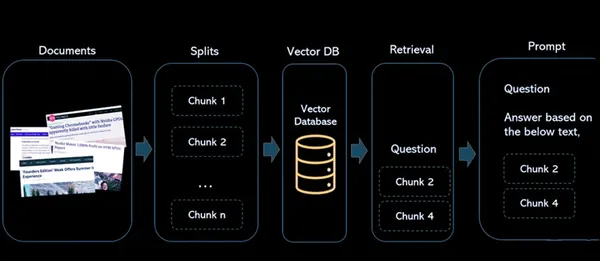

Components

- Documents: These are the articles or content that you want to index for future reference and retrieval.

- Splits: This step breaks the documents into smaller, manageable chunks. This is important for working with large documents or articles, ensuring they fit within the constraints of the language model and can be indexed efficiently.

- Vector Database: The vector database is a crucial part of the architecture. It stores the vector embeddings generated from the content. Each vector is associated with the original content it was derived from, creating a link between the numerical representation and the source material.

- Retrieval: When a user queries the system, the same embedding model is used to create embeddings for the query. These query embeddings are then used to search the vector database for similar vector embeddings. The result is a set of similar vectors, each associated with its original content source.

- Prompt: This is where the user interacts with the system. Users input queries, and the system processes these queries to retrieve relevant information from the vector database, providing answers and references to the source content.

Document Loaders in LangChain

Use document loaders to load data from a source in the form of Documents. A Document is a piece of text and associated metadata. For example, there are document loaders for loading a simple .txt file, for loading the text contents of articles or blogs, or even for loading a transcript of a YouTube video.

There are many types of Document Loaders:

| Loader | Usage |
|---|---|
| TextLoader | Loads plain text documents for processing. |
| CSVLoader | Imports data from CSV files. |
| DirectoryLoader | Reads and loads content from directories. |
| UnstructuredHTMLLoader | Fetches and processes unstructured HTML content. |
| JSONLoader | Loads data from JSON files. |
| UnstructuredMarkdownLoader | Processes and loads unstructured Markdown content. |
| PyPDFLoader | Extracts text content from PDF files for further processing. |

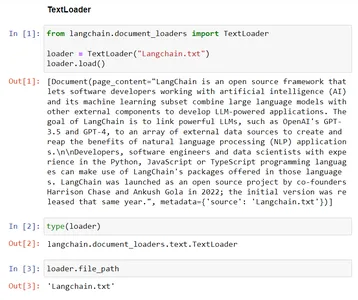

Example – TextLoader

This code shows how TextLoader from LangChain works. It creates a TextLoader for an existing file, “Langchain.txt,” and loads its text, getting it ready for further processing. The ‘file_path’ attribute stores the path to the file being loaded.

# Import the TextLoader class from the langchain.document_loaders module
from langchain.document_loaders import TextLoader

# Create a TextLoader for the file to load, here "Langchain.txt"
loader = TextLoader("Langchain.txt")

# Load the content of the file ("Langchain.txt") as a list of Document objects
loader.load()

# Check the type of the 'loader' instance, which should be 'TextLoader'
type(loader)

# The path of the file associated with the TextLoader
loader.file_path
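To make the idea of "a piece of text and associated metadata" concrete, here is a minimal stand-in written with the standard library only. `SimpleDocument` and `load_text_file` are hypothetical names used for illustration; they imitate the general shape of a loaded document and are not LangChain's own classes.

```python
import os
import tempfile
from dataclasses import dataclass, field


@dataclass
class SimpleDocument:
    """Hypothetical stand-in for a loaded document: text plus metadata."""
    page_content: str
    metadata: dict = field(default_factory=dict)


def load_text_file(file_path):
    """Read a text file and wrap it in a one-element list of documents."""
    with open(file_path, encoding="utf-8") as f:
        content = f.read()
    return [SimpleDocument(page_content=content, metadata={"source": file_path})]


# Write a small sample file so the sketch is self-contained
with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "Langchain.txt")
    with open(path, "w", encoding="utf-8") as f:
        f.write("LangChain is a framework for LLM applications.")
    docs = load_text_file(path)

print(docs[0].page_content)
print(docs[0].metadata)
```

Keeping the source path in the metadata is what later lets an answer point back to the article it came from.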

Text Splitters in LangChain

Text Splitters are responsible for splitting up a document into smaller documents. These smaller units make it easier to work with and process the content efficiently. In the context of our ResearchBot project, we use text splitters to prepare the data for further analysis and retrieval.

Why do we need text splitters?

LLMs have token limits. Hence we need to split text, which can be large, into small chunks so that each chunk is under the token limit.

Manual approach of splitting the text into chunks

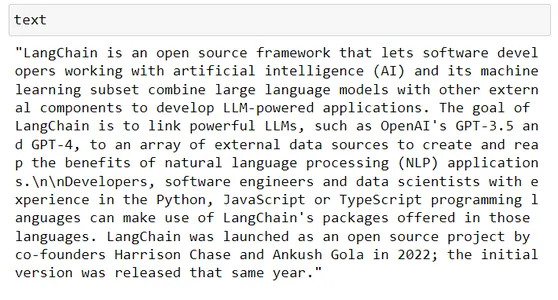

# Taking some random text from Wikipedia
text

# Say the LLM token limit is 100; in our code we can do a simple thing such as this
# (note that this slices characters, a rough stand-in for tokens)
text[:100]

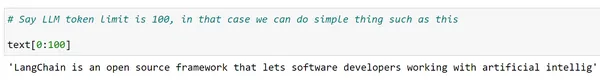

But we want complete words, and we want to do this for the entire text. Maybe we can use Python’s split function:

words = text.split(" ")
len(words)

chunks = []
s = ""
for word in words:
    s += word + " "
    if len(s) > 200:
        chunks.append(s)
        s = ""
chunks.append(s)

chunks[:2]

Splitting data into chunks can be done in native Python, but it is a tedious process. You may also need to try several delimiters one after another to ensure that each chunk does not exceed the token limit of the respective LLM.
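As a small illustration of the manual approach, the loop can be wrapped in a function that checks the size before adding a word, so chunks stay within the limit. This is a sketch: `chunk_words` is a hypothetical helper, and it measures characters as a rough stand-in for tokens.

```python
def chunk_words(text, max_chars=200):
    """Greedily pack whole words into chunks of at most max_chars characters.

    A single word longer than max_chars is kept whole in its own chunk.
    """
    chunks, current = [], ""
    for word in text.split():
        candidate = word if not current else current + " " + word
        if len(candidate) <= max_chars or not current:
            current = candidate
        else:
            # Adding this word would overflow, so close the current chunk
            chunks.append(current)
            current = word
    if current:
        chunks.append(current)
    return chunks


sample = "word " * 100
chunks = chunk_words(sample, max_chars=50)
print(len(chunks), [len(c) for c in chunks[:3]])
```

Even this small helper needs special handling for oversized words and trailing text, which is part of why a ready-made splitter is attractive.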

LangChain provides a better way through text splitter classes. There are multiple text splitter classes in LangChain that allow us to do this.

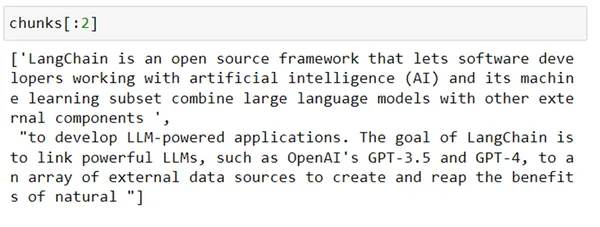

1. Character Text Splitter

This class splits text into smaller chunks based on a specified separator, such as paragraphs, periods, commas, or line breaks (\n). It is most useful for breaking text into chunks for further processing.

from langchain.text_splitter import CharacterTextSplitter

splitter = CharacterTextSplitter(
    separator="\n",
    chunk_size=200,
    chunk_overlap=0
)
chunks = splitter.split_text(text)
len(chunks)

for chunk in chunks:
    print(len(chunk))

As you can see, although we gave a chunk size of 200, the split was based on \n, so it ended up creating chunks that are bigger than 200.
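Why a single separator can produce oversized chunks is easy to see in a simplified imitation. The function below is a sketch, not LangChain's implementation: it splits on one separator and merges neighbouring pieces, so a line that is itself longer than the chunk size has nowhere to be cut.

```python
def split_on_separator(text, separator="\n", chunk_size=200):
    """Split on one separator, then merge pieces while they fit in chunk_size."""
    pieces = [p for p in text.split(separator) if p]
    chunks, current = [], ""
    for piece in pieces:
        candidate = piece if not current else current + separator + piece
        if len(candidate) <= chunk_size:
            current = candidate
        else:
            if current:
                chunks.append(current)
            # The piece starts a new chunk even if it is longer than chunk_size
            current = piece
    if current:
        chunks.append(current)
    return chunks


text_demo = "short line\n" + "x" * 300 + "\nanother short line"
sizes = [len(c) for c in split_on_separator(text_demo)]
print(sizes)
```

The 300-character line has no line break inside it, so it survives as one chunk above the requested size.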

Another LangChain class can recursively split the text based on a list of separators: RecursiveCharacterTextSplitter. Let’s see how it works.

2. Recursive Text Splitter

This text splitter works recursively through a list of separator characters. It tries to split the text on the first separator and, for any piece that is still too large, moves on to the next separator, continuing until the pieces fit within the chunk size.

from langchain.text_splitter import RecursiveCharacterTextSplitter r_splitter = RecursiveCharacterTextSplitter( separators = ["nn", "n", " "], # List of separators chunk_size = 200, # size of each chunk created chunk_overlap = 0, # size of overlap between chunks length_function = len # Function to calculate size,

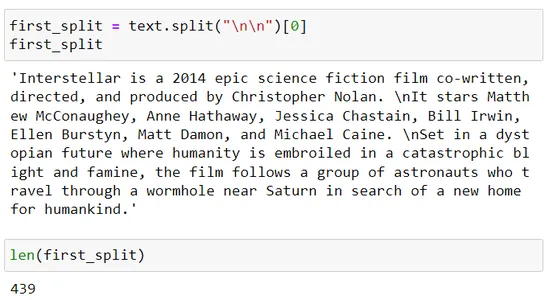

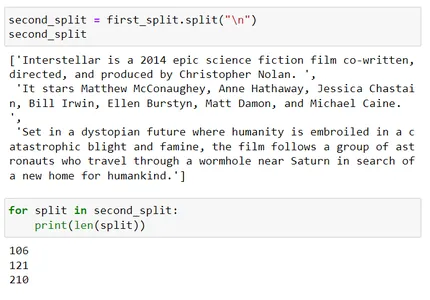

) chunks = r_splitter.split_text(text) for chunk in chunks: print(len(chunk)) first_split = text.split("nn")[0]

first_split

len(first_split) second_split = first_split.split("n")

second_split

for split in second_split: print(len(split)) second_split[2]

second_split[2].split(" ")

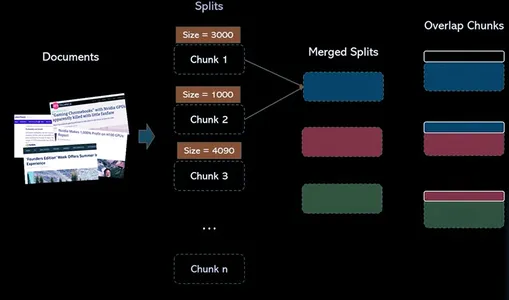

Let’s understand how we formed these chunks:

The recursive text splitter uses a list of separators, i.e. separators = ["\n\n", "\n", " "]

It first splits using \n\n, and if a resulting chunk is larger than the chunk_size parameter, which is 200 in this case, it uses the next separator, which is \n.

Here the third split exceeds the chunk size of 200, so the splitter tries to split it further using the third separator, which is ' ' (space).

When you split this using a space (i.e. second_split[2].split(" ")), it separates out each word and then merges those words into chunks whose size is close to 200.
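The walkthrough above can be condensed into a small recursive function. This is a simplified sketch of the idea, not LangChain's actual code: `recursive_split` is a hypothetical helper that falls back to the next separator only for pieces that are still too large, and hard-cuts text if it runs out of separators.

```python
def recursive_split(text, separators=("\n\n", "\n", " "), chunk_size=200):
    """Split text so each chunk fits in chunk_size, trying separators in order."""
    if len(text) <= chunk_size:
        return [text] if text else []
    if not separators:
        # No separator left: fall back to a hard cut
        return [text[i:i + chunk_size] for i in range(0, len(text), chunk_size)]

    sep, rest = separators[0], separators[1:]
    chunks, current = [], ""
    for piece in text.split(sep):
        if not piece:
            continue
        if len(piece) > chunk_size:
            # Too large even on its own: flush and retry with the next separator
            if current:
                chunks.append(current)
                current = ""
            chunks.extend(recursive_split(piece, rest, chunk_size))
            continue
        candidate = piece if not current else current + sep + piece
        if len(candidate) <= chunk_size:
            current = candidate  # merge small pieces back together
        else:
            chunks.append(current)
            current = piece
    if current:
        chunks.append(current)
    return chunks


demo = "Intro paragraph.\n\n" + " ".join(["word"] * 120) + "\nlast line"
chunks = recursive_split(demo, chunk_size=100)
for chunk in chunks:
    print(len(chunk))
```

In the demo, the long second paragraph cannot be cut on \n\n or \n, so it is the space separator that finally brings every chunk under the limit.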

Vector Database

Now, consider a scenario where you need to store millions or even billions of word embeddings, which is a common situation in a real-world application. Relational databases, while capable of storing structured data, may not be suitable because they are not designed to store and search such large amounts of vector data.

This is where Vector Databases come into play. A Vector Database is designed to efficiently store and retrieve vector data, making it suitable for word embeddings.

Vector Databases are revolutionizing information retrieval by using semantic search. They leverage the power of word embeddings and smart indexing techniques to make searches faster and more accurate.

What’s the Difference Between a Vector Index and a Vector Database?

Standalone vector indices like FAISS (Facebook AI Similarity Search) can improve search and retrieval of vector embeddings, but they lack capabilities that exist in a full database. Vector databases, on the other hand, are purpose-built to manage vector embeddings, providing several advantages over using standalone vector indices.

Steps:

1 : Create source embeddings for the text column

2 : Build a FAISS Index for vectors

3 : Normalize the source vectors and add to index

4 : Encode search text using same encoder and normalize the output vector

5 : Search for similar vectors in the FAISS index created

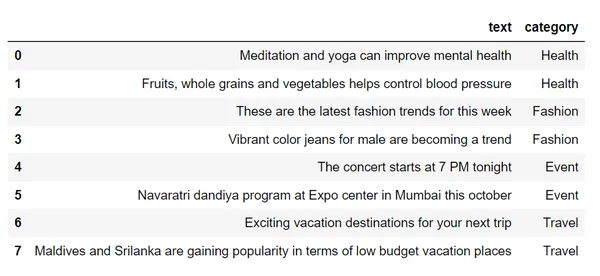

df = pd.read_csv("sample_text.csv")

df # Step 1 : Create source embeddings for the text column

from sentence_transformers import SentenceTransformer

encoder = SentenceTransformer("all-mpnet-base-v2")

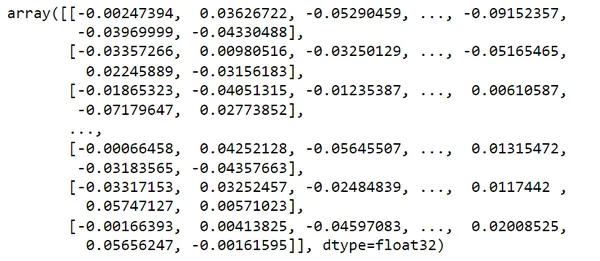

vectors = encoder.encode(df.text)

vectors # Step 2 : Build a FAISS Index for vectors

import faiss

index = faiss.IndexFlatL2(dim) # Step 3 : Normalize the source vectors and add to index

index.add(vectors)

index # Step 4 : Encode search text using same encoder

search_query = "looking for places to visit during the holidays"

vec = encoder.encode(search_query)

vec.shape

svec = np.array(vec).reshape(1,-1)

svec.shape # Step 5: Search for similar vector in the FAISS index

distances, I = index.search(svec, k=2)

distances

row_indices = I.tolist()[0]

row_indices

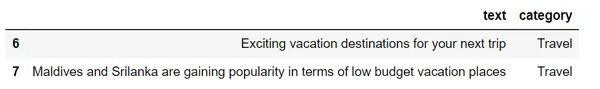

df.loc[row_indices]

In this dataset, each text is converted into a vector using embeddings.

Consider my search_query = “looking for places to visit during the holidays”.

Using semantic search, the index returns the 2 results most similar to my query, both from the Travel category.
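What a flat L2 index does during a search can be shown with a brute-force sketch in plain Python. The function name `flat_l2_search` and the two-dimensional toy vectors are made up for illustration; real embeddings have hundreds of dimensions, and FAISS runs the same kind of exhaustive comparison far more efficiently.

```python
def flat_l2_search(vectors, query, k=2):
    """Compare the query with every stored vector using squared L2 distance."""
    scored = sorted(
        (sum((a - b) ** 2 for a, b in zip(vec, query)), idx)
        for idx, vec in enumerate(vectors)
    )
    top = scored[:k]
    return [dist for dist, _ in top], [idx for _, idx in top]


# Hypothetical 2D "embeddings": two travel-like rows and one unrelated row
toy_vectors = [
    [0.9, 0.1],
    [0.8, 0.2],
    [0.1, 0.9],
]
distances, row_indices = flat_l2_search(toy_vectors, [1.0, 0.0], k=2)
print(distances, row_indices)
```

The returned row indices play the same role as `row_indices` above: they point back to the rows of the original data.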

When you perform a search query, a vector database can use techniques like Locality-Sensitive Hashing (LSH) to speed up the process. LSH groups similar vectors into buckets, allowing for faster and more targeted searches. This means you don’t have to compare your query vector with every stored vector. (The IndexFlatL2 index used above, by contrast, compares the query with every stored vector.)
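One common flavour of LSH uses random hyperplanes: each vector gets one bit per hyperplane depending on which side it falls, and vectors with the same bit pattern share a bucket. The sketch below assumes that variant; the helper names and parameters are hypothetical and not taken from any particular database.

```python
import random


def make_hyperplanes(dim, n_planes, seed=0):
    """Draw random hyperplanes (as normal vectors) with a fixed seed."""
    rng = random.Random(seed)
    return [[rng.gauss(0, 1) for _ in range(dim)] for _ in range(n_planes)]


def lsh_key(vector, hyperplanes):
    """One bit per hyperplane: 1 if the vector lies on its positive side."""
    return tuple(
        int(sum(v * h for v, h in zip(vector, plane)) >= 0)
        for plane in hyperplanes
    )


def build_buckets(vectors, hyperplanes):
    """Group vector indices by their hash key."""
    buckets = {}
    for idx, vec in enumerate(vectors):
        buckets.setdefault(lsh_key(vec, hyperplanes), []).append(idx)
    return buckets


planes = make_hyperplanes(dim=3, n_planes=8)
stored = [[1.0, 2.0, 3.0], [2.0, 4.0, 6.0], [-1.0, -2.0, -3.0]]
buckets = build_buckets(stored, planes)

# Only the vectors in the query's bucket need an exact comparison
query = [0.5, 1.0, 1.5]
candidates = buckets.get(lsh_key(query, planes), [])
print(candidates)
```

Vectors pointing in the same direction always share a bucket, while nearby but not identical directions only usually do, which is why LSH trades a little accuracy for speed.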

Retrieval

When a user queries the system, the same embedding model is used to create embeddings for the query. These query embeddings are then used to search the vector database for similar vector embeddings. The result is a group of similar vectors, each associated with its original content source.

Challenges of Retrieval

Retrieval in semantic search poses several challenges, such as the token limit imposed by language models like GPT-3. When several relevant data chunks are retrieved, the combined text can exceed that limit.

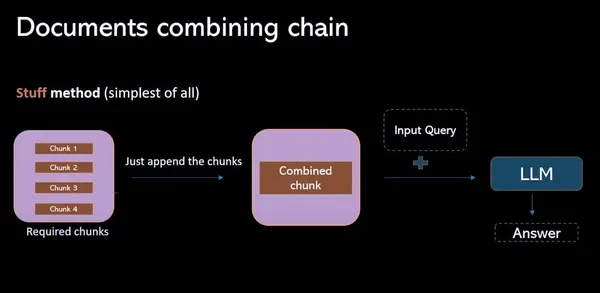

Stuff Method

This method collects all relevant data chunks from the vector database and combines them into a single prompt. Its main disadvantage is that the prompt can exceed the token limit, which results in incomplete responses.
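A minimal sketch of the stuff method, assuming a crude word count as a stand-in for a real tokenizer. `count_tokens`, `build_stuff_prompt`, and the prompt wording are all hypothetical; the point is only that every chunk lands in one prompt, which can overflow the limit.

```python
def count_tokens(text):
    """Rough stand-in for a tokenizer: counts whitespace-separated words."""
    return len(text.split())


def build_stuff_prompt(question, chunks, token_limit):
    """Put every retrieved chunk into one prompt and check it against the limit."""
    context = "\n\n".join(chunks)
    prompt = f"Answer using only this context:\n{context}\n\nQuestion: {question}"
    if count_tokens(prompt) > token_limit:
        raise ValueError("stuffed prompt exceeds the token limit")
    return prompt


chunks = ["The phone was announced in September.", "It comes in five colours."]
prompt = build_stuff_prompt("When was the phone announced?", chunks, token_limit=100)
print(prompt)

# With many or long chunks, the same call no longer fits
try:
    build_stuff_prompt("When was the phone announced?", chunks * 50, token_limit=100)
    overflowed = False
except ValueError:
    overflowed = True
print(overflowed)
```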

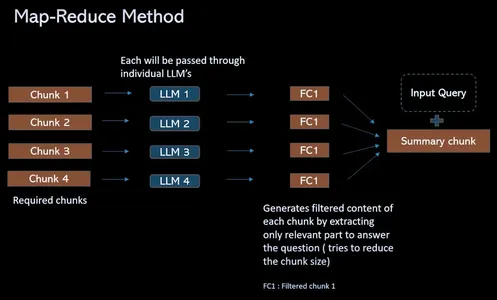

Map Reduce Method

To overcome the token limit and streamline the retrieval QA process, the map reduce method does not combine the relevant chunks into a single prompt. If there are 4 chunks, each one is passed, along with the question, to its own isolated LLM call. This lets the language model focus on the content of each chunk independently and produces a separate answer for each chunk. A final LLM call then combines these individual answers to find the best answer based on the insights gathered from each chunk.
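The flow of calls in the map reduce method can be sketched as follows. This is an illustration only: `llm` is assumed to be any callable that takes a prompt string and returns a string, `fake_llm` is a stand-in used so the sketch runs without an API key, and the prompt wording is invented.

```python
def map_reduce_answer(question, chunks, llm):
    """Ask the question against each chunk separately, then combine the answers."""
    # Map step: one independent LLM call per chunk
    partial_answers = [
        llm(f"Context:\n{chunk}\n\nQuestion: {question}") for chunk in chunks
    ]
    # Reduce step: one final call over the per-chunk answers
    combined = "\n".join(f"- {answer}" for answer in partial_answers)
    return llm(
        f"Partial answers:\n{combined}\n\n"
        f"Question: {question}\nGive the best final answer."
    )


calls = []


def fake_llm(prompt):
    """Stand-in for a real model: records the prompt and returns a numbered reply."""
    calls.append(prompt)
    return f"answer {len(calls)}"


final = map_reduce_answer(
    "What is the price?", ["chunk 1", "chunk 2", "chunk 3", "chunk 4"], fake_llm
)
print(len(calls), final)
```

With 4 chunks this makes 5 calls in total, so the method trades extra LLM calls for prompts that each stay small.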

Workflow of ResearchBot

(1) Load Data

In this step, data, like text or documents, is imported and ready for further processing, making it available for analysis.

from langchain.document_loaders import UnstructuredURLLoader

# Provide the URLs to scrape the data from
loaders = UnstructuredURLLoader(urls=[
    "",
    ""
])
data = loaders.load()
len(data)

(2) Split Data to Create Chunks

The data is divided into smaller, more manageable sections or chunks, facilitating efficient handling and processing of large text or documents.

from langchain.text_splitter import RecursiveCharacterTextSplitter

text_splitter = RecursiveCharacterTextSplitter(
    chunk_size=1000,
    chunk_overlap=200
)

# Use split_documents rather than split_text to get the chunks as documents
docs = text_splitter.split_documents(data)
len(docs)
docs[0]

(3) Create Embeddings for these Chunks and Save them to FAISS Index

The text chunks are converted into numerical vector representations (embeddings) and stored in a FAISS index, optimizing the retrieval of similar vectors.

import os
import pickle
from langchain.embeddings import OpenAIEmbeddings
from langchain.vectorstores import FAISS

# Create the embeddings of the chunks using OpenAIEmbeddings
embeddings = OpenAIEmbeddings()

# Pass the documents and embeddings in order to create the FAISS vector index
vectorindex_openai = FAISS.from_documents(docs, embeddings)

# Store the created vector index locally
file_path = "vector_index.pkl"
with open(file_path, "wb") as f:
    pickle.dump(vectorindex_openai, f)

# Load the vector index back from disk
if os.path.exists(file_path):
    with open(file_path, "rb") as f:
        vectorIndex = pickle.load(f)

(4) Retrieve Similar Embeddings for a Given Question and Call LLM to Retrieve Final Answer

For a given query, we retrieve similar embeddings and use these vectors to interact with a language model (LLM) in order to streamline information retrieval and provide the final answer to the user’s question.

import langchain
from langchain.llms import OpenAI
from langchain.chains import RetrievalQAWithSourcesChain

# Initialise the LLM with the necessary parameters
llm = OpenAI(temperature=0.9, max_tokens=500)

chain = RetrievalQAWithSourcesChain.from_llm(
    llm=llm,
    retriever=vectorIndex.as_retriever()
)
chain

query = ""  # ask your query
langchain.debug = True
chain({"question": query}, return_only_outputs=True)

Final Application

After using all these stages (Document Loader, Text Splitter, Vector DB, Retrieval, Prompt) and building an application with the help of Streamlit, we completed our ResearchBot.

This is the section of the page where the URLs of blogs or articles are entered. I gave it links about the latest iPhone models released in 2023. Before building ResearchBot, everyone will ask: we already have ChatGPT, so why build ResearchBot? Here’s the answer:

ChatGPT’s Answer:

ResearchBot’s Answer:

Here, my query is “What is the price of Apple iPhone 15?”

This data is from 2023 and is not available to ChatGPT 3.5, but we supplied our ResearchBot with the latest information about iPhones, so it returned the required answer.

These are the 3 Issues of Using ChatGPT:

- Copy Pasting the Article Content is a tedious job.

- We need an Aggregate Knowledge Base.

- Word Limit – 3000 words

Conclusion

We have seen the concepts of semantic search and vector databases applied in a real-world scenario. ResearchBot’s ability to efficiently retrieve answers from a vector database using semantic search shows the potential of LLMs in information retrieval and question-answering systems. We’ve built a tool that makes it easy to find and summarize important information using its search features. It’s a powerful solution for those seeking knowledge, and the technology opens up new horizons for anyone in search of data-driven insights.

Frequently Asked Questions

A. Vector databases are the backbone of modern semantic search engines. They are specialized databases designed to handle high-dimensional vector data, providing efficient ways to store and search vectors that represent text or other types of data.

A. A semantic search engine is better at interpreting the meaning of words. Because it can better understand query intent, it can generate search results that are more relevant to the searcher than what a traditional search engine may show.

A. FAISS is not a vector database itself; rather, it is a standalone vector search library used to perform vector similarity search. Popular examples of such libraries include FAISS, HNSW, and Annoy.

A. A large language model (LLM) is a type of artificial intelligence (AI) algorithm that uses deep learning techniques and massively large data sets to understand, summarize, generate, and predict new content. Chatbots built on LLMs are skilled at natural language understanding and conversation.

The media shown in this article is not owned by Analytics Vidhya and is used at the Author’s discretion.


- Source: https://www.analyticsvidhya.com/blog/2023/10/empower-your-research-with-a-tailored-llm-powered-ai-assistant/